AI Litigation Is No Longer “Coming.” It’s Already Here.

AI is no longer a future legal risk. It is already reshaping courtrooms worldwide, and businesses without provable AI governance are about to learn the difference between preparation and panic.

Bob McTaggart edited with AI

1/26/2026 · 2 min read


For most of 2023 and 2024, AI litigation was treated as something abstract—academic debates about copyright, fairness, and innovation. Interesting, but distant.

That phase is over.

By early 2026, AI has become a routine fact pattern in court filings. Not always the headline, but increasingly the mechanism behind harm, dispute, or alleged negligence.

There is no single public counter that says “X AI cases this week.” Courts don’t work that way. But when you triangulate litigation trackers, law-firm forecasts, sanctions orders, and regulatory actions, a consistent picture emerges:

AI-related litigation has shifted from isolated landmark cases into a steady, global caseload.

What “This Week” Actually Looks Like

Current AI litigation activity generally falls into three categories:

1. Foundational model disputes

Large copyright and data-use cases continue grinding through discovery and motions. Their importance lies less in speed and more in precedent. Once decided, follow-on litigation will be immediate.

2. Professional misconduct and sanctions

Courts are now sanctioning lawyers and professionals for AI-generated hallucinations cited as fact. What began as novelty mistakes has become a documented pattern appearing directly in court records.

3. Ordinary cases with AI underneath

Employment screening, algorithmic pricing, biometric tracking, voice fraud, deceptive AI assistants. These cases don’t always call themselves “AI lawsuits,” but AI is central to the alleged harm.

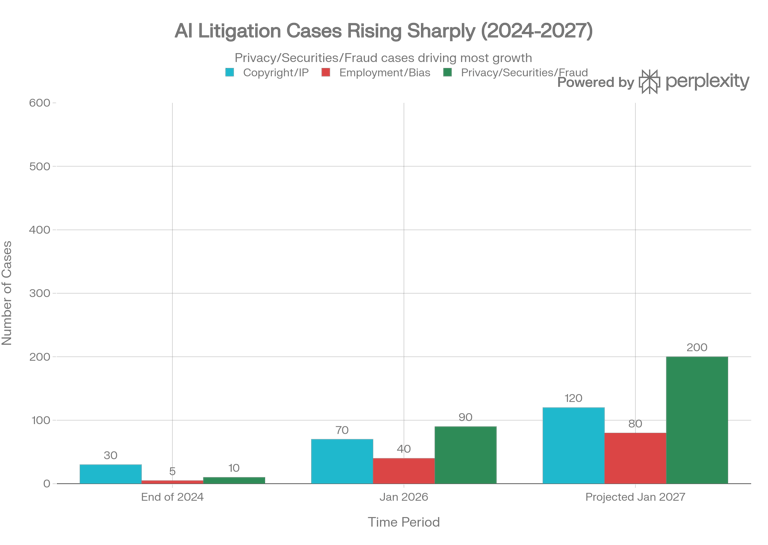

If you define an AI legal case as any matter where AI training, outputs, or AI-assisted conduct are pleaded facts, the global active docket now sits in the low hundreds. Only a subset moves each week, but something moves every week.

This Week vs. Last Year

At this time last year, AI litigation revolved around a short list of marquee disputes. Most summaries referenced perhaps a dozen major cases.

Today, there are two to three times as many notable matters, spanning more legal categories, plus a growing tail of smaller but telling disputes. This growth isn’t hype. It’s structural.

AI moved from experimental to operational. Courts follow operations.

What Next Year Likely Brings

Looking one year ahead, a conservative projection points to 50–100% growth in active AI-salient disputes as regulations mature and plaintiffs’ firms specialize.

That suggests several hundred—potentially five hundred—active AI-related matters worldwide by early 2027, with dozens of meaningful court events every week.
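The arithmetic behind that range can be checked with a short sketch. The baseline of 200 to 250 active matters is an assumption chosen to represent "low hundreds"; the article gives no exact count, and `project_active_matters` is a hypothetical helper name.

```python
def project_active_matters(baseline_low, baseline_high,
                           growth_low=0.5, growth_high=1.0):
    """Return the (low, high) range of active matters after one year.

    The low bound applies the smallest growth rate to the smallest baseline;
    the high bound applies the largest growth rate to the largest baseline.
    """
    low = round(baseline_low * (1 + growth_low))
    high = round(baseline_high * (1 + growth_high))
    return low, high


# Assumed baseline: "low hundreds" read as 200 to 250 (not a figure from the article).
low, high = project_active_matters(200, 250)
print(f"Projected active matters by early 2027: {low} to {high}")
# prints "Projected active matters by early 2027: 300 to 500"
```

Under those assumed inputs, 50% growth on the low end and 100% growth on the high end gives a range of 300 to 500, which is where "several hundred, potentially five hundred" comes from.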

The exact trajectory depends on:

• How foundational copyright cases resolve

• How aggressively regulators enforce new AI statutes

• Whether courts formalize doctrines around AI agency and vendor liability

But raw case counts miss the deeper shift.

The Governance Reality Courts Care About

Three trends matter more than volume:

Evidence expectations

Courts are converging on requirements for disclosure of AI use, proof of human oversight, and limits on AI-generated expert material. System-generated logs are becoming first-order evidence.

Agency and vendor liability

To the extent courts treat AI systems as agents of the user, organizations that cannot demonstrate disciplined governance face the same liability exposure as their vendors.

Insurability

Insurers increasingly tie coverage to governance maturity. No documented controls. No explainability. No auditable trail. No coverage.

Many organizations believe they are “doing AI governance” because they save screenshots, keep Word files, and write policies. In litigation, that doesn’t signal diligence. It signals improvisation.
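For contrast, here is a minimal sketch of what a contemporaneous, tamper-evident record of AI use could look like. It is an illustration under stated assumptions, not a description of any particular product or legal standard: the field names (`actor`, `system`, `action`, `reviewer`) and the helpers `append_entry` and `verify_chain` are hypothetical. Each entry is timestamped when it is written and carries a hash of the previous entry, so a later edit to any record breaks the chain.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(record):
    """Hash a record deterministically (sorted keys give a stable encoding)."""
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_entry(log, actor, system, action, reviewer=None):
    """Append a timestamped entry linked to the hash of the previous entry."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "actor": actor,        # who used the AI system (hypothetical field)
        "system": system,      # which AI system was used (hypothetical field)
        "action": action,      # what was done with it
        "reviewer": reviewer,  # human oversight, if any
        "prev_hash": log[-1]["hash"] if log else GENESIS_HASH,
    }
    entry = dict(record, hash=_digest(record))
    log.append(entry)
    return entry


def verify_chain(log):
    """Return True only if every entry is unmodified and correctly linked."""
    prev_hash = GENESIS_HASH
    for entry in log:
        record = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(record):
            return False
        prev_hash = entry["hash"]
    return True


log = []
append_entry(log, "analyst@example.com", "contract-summarizer", "generated draft summary")
append_entry(log, "analyst@example.com", "contract-summarizer", "approved summary",
             reviewer="counsel@example.com")
print(verify_chain(log))  # prints True

log[0]["action"] = "edited after the fact"
print(verify_chain(log))  # prints False
```

A hash chain makes after-the-fact edits detectable; it does not prevent them, and it says nothing about whether the logged facts were accurate when written. The point of the sketch is the difference in kind from a folder of screenshots: the record is created at the time of use and its integrity can be checked mechanically.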

The Tsunami Is a Process, Not a Moment

Litigation tsunamis don’t arrive as walls of water.

They arrive as precedent, followed by expectation, followed by enforcement.

By the time the wave is visible, it’s already too late to build a boat.

The organizations that survive won’t be the ones with the best explanations. They’ll be the ones with provable, contemporaneous, defensible evidence of how AI was actually used.

That’s the life raft.

And right now, the water is already pulling back.

Author:

Bob McTaggart

Veteran-led AI Governance & Trust Infrastructure

Edited with AI

#ai #riskmanagement #trustedbyheroesdotcom
