Good Faith Compliance

Fast, Practical AI Governance for Small Business, Public Trust, and Responsible AI Use

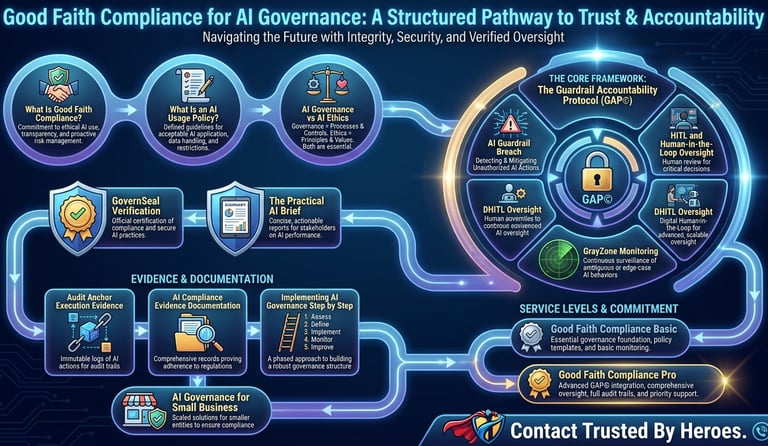

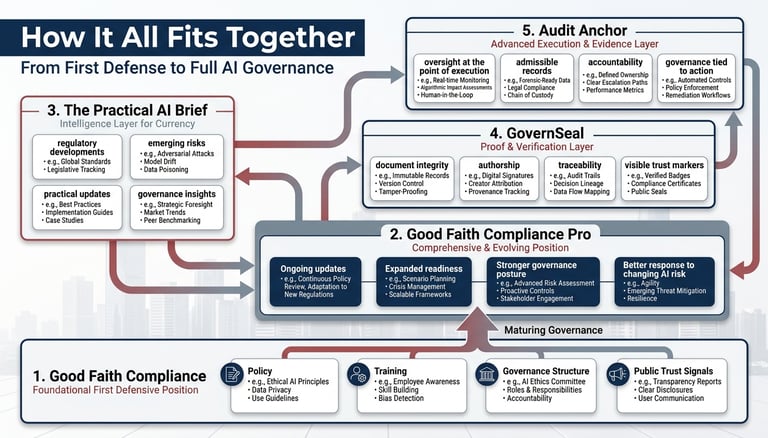

The Best First Step: Good Faith Compliance

The fastest and easiest first step is Good Faith Compliance.

If your organization is already using AI, or if your people may be using AI without clear rules, Good Faith Compliance gives you a practical starting point that can be put in place quickly.

It creates a first defensive position: policy, training, oversight expectations, verification, documentation, and evidence of good-faith effort.

For many small businesses, law firms, nonprofits, employers, and public-trust organizations, this first step can address a major share of immediate AI governance and liability-management needs.

We estimate that Good Faith Compliance can address approximately 85% of the immediate AI governance, AI guidance, and liability-management concerns most small organizations are facing right now.

That does not mean the work is finished.

It means the organization is no longer standing there with nothing.

It has a position.

It has rules.

It has training.

It has evidence.

It has a better answer if a regulator, insurer, client, employee, board, funder, or member of the public asks:

“What did you do to govern AI use?”

The answer should not be:

“We were thinking about it.”

The answer should be:

“We saw the risk, created rules, trained our people, required oversight, and documented reasonable care.”

That is what Good Faith Compliance is built to do.

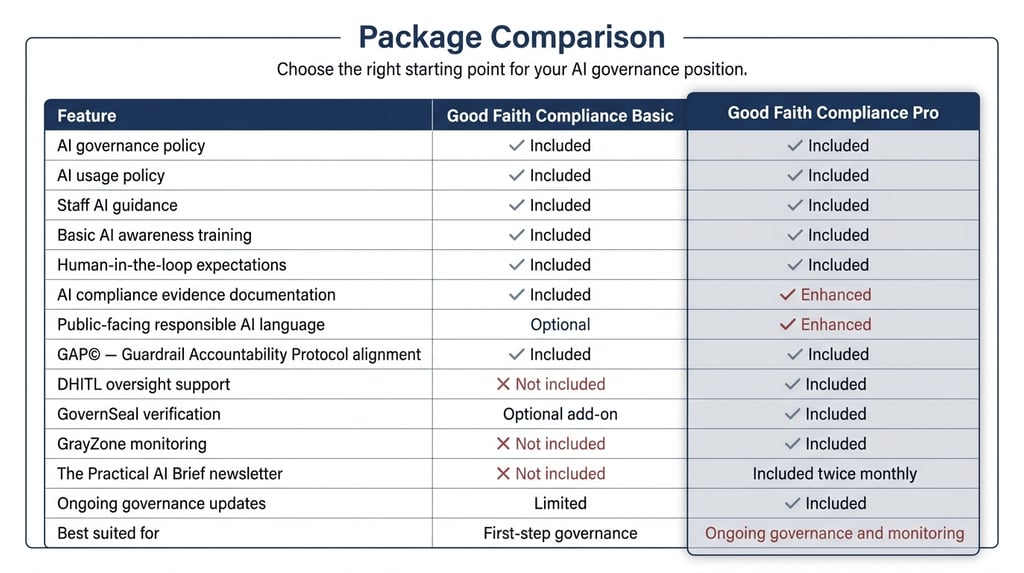

For a More Comprehensive Start: Good Faith Compliance Pro

For organizations that want a stronger starting position, Good Faith Compliance Pro adds ongoing support, monitoring, verification, intelligence, and oversight.

Good Faith Compliance Basic gives you the first defense.

Good Faith Compliance Pro helps keep that defense current.

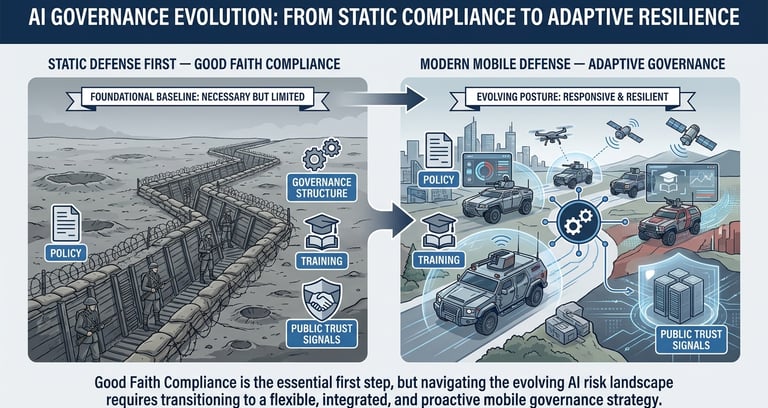

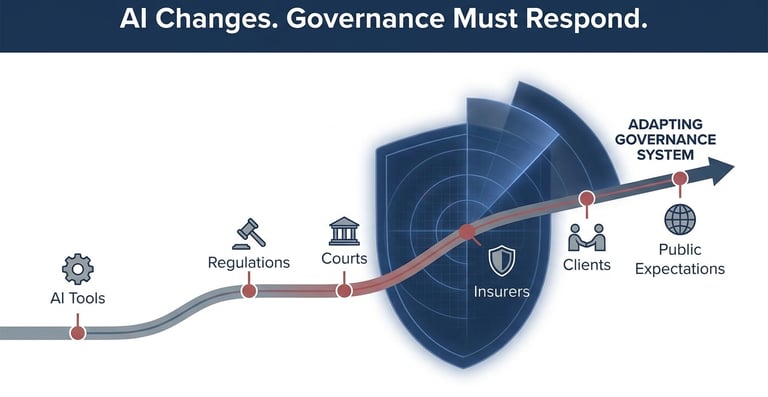

That matters because AI governance is not static.

AI tools change.

Employee behavior changes.

Regulations change.

Court expectations change.

Professional guidance changes.

Client questions change.

Insurance standards change.

Public expectations change.

A static first position is important, but it cannot stay static forever.

As the AI environment changes, your compliance and liability-management system needs to respond.

More importantly, it needs to anticipate.

Good Faith Compliance Pro is designed for organizations that want to move beyond the first step and maintain a stronger, more adaptive position through:

DHITL oversight support

GovernSeal verification

GrayZone monitoring

The Practical AI Brief, delivered twice per month

ongoing governance updates

enhanced AI compliance evidence documentation

stronger public-facing trust language

AI risk awareness and prevention support

accountability protocol alignment

Good Faith Compliance is the fast first move.

Good Faith Compliance Pro is the stronger continuing position.

Why Acting Now Matters

AI is already inside organizations.

It is being used in emails, reports, summaries, research, marketing, hiring support, client communication, internal planning, compliance work, document review, and decision support.

Some of that use is approved.

Some of it is not.

That is why AI governance for small business matters now.

The real question is no longer:

“Will our organization use AI?”

The real question is:

“Can we show that AI is being used with policy, oversight, verification, documentation, and human accountability in place?”

Good Faith Compliance helps your organization move quickly from exposure to structure.

It is not a claim of perfection.

It is a defensible starting position.

It is better to act sooner by choice than later because a regulator, insurer, client complaint, employment dispute, privacy incident, AI error, or loss of public confidence forces the issue.

Close the GAP before AI creates exposure.

Our Position

Trusted By Heroes was early to recognize the direction of AI governance, AI guidance, shadow AI, human oversight, documentation, and public trust.

To this point, our concerns have not been theoretical.

They have been proven right by the direction of the market, regulators, professional bodies, courts, insurers, and the public conversation around responsible AI use.

We are happy to help organizations start now, strengthen their position, and avoid waiting until pressure forces the conversation.

The best move is not panic.

The best move is not delay.

The best move is to put a practical first defense in place, then improve it as the environment changes.

That is the purpose of Good Faith Compliance.

“Who We Are and How We Do What We Do”

Before you decide whether Good Faith Compliance is right for your organization, hear directly from Bob McTaggart, founder of Trusted By Heroes.

In this short video, Bob explains who we are, why Trusted By Heroes was built, and how we approach AI governance differently.

We are not selling AI tools.

We are helping organizations put structure, oversight, verification, and evidence around AI use before unmanaged AI becomes a legal, regulatory, operational, employment, insurance, or public-trust problem.

Good Faith Compliance

Good Faith Compliance is built for organizations that know AI is already being used and need a practical first step: policy, training, human oversight, verification, documentation, and a defensible record of reasonable care.

What Is Good Faith Compliance?

Good Faith Compliance is a practical AI governance package for organizations that need structure without unnecessary complexity.

It helps establish:

AI governance policy

AI usage policy

staff AI guidance

human-in-the-loop oversight

DHITL review principles

AI compliance evidence documentation

GovernSeal verification

public-facing trust language

governance improvement roadmap

certificate-style documentation of reasonable governance steps

The purpose is simple:

Show that the organization recognized AI risk, created rules, trained people, required oversight, verified key records, and documented reasonable care.

Good Faith Compliance does not pretend AI risk is solved.

It creates a clear, responsible, evidence-backed first position.

What Is an AI Usage Policy?

An AI usage policy is a plain-language rule set that explains how people inside an organization may use AI tools.

It should answer:

Who may use AI?

Which AI tools are approved?

What information must never be entered into AI?

What tasks are allowed?

What tasks are prohibited?

What requires human review?

When must AI use be escalated?

Who owns the final decision?

What records must be kept?

Without an AI usage policy, people guess.

When people guess, risk grows.

Good Faith Compliance replaces guessing with clear AI guidance.

AI Governance vs. AI Ethics

There is a difference between AI governance and AI ethics.

AI ethics speaks to values:

Is this fair?

Is this responsible?

Is this transparent?

Should AI be used this way?

AI governance turns those values into operating controls:

What policy exists?

Who is responsible?

What oversight is required?

What evidence is kept?

What happens when risk appears?

Who has authority to stop the action?

Ethics explains what an organization believes.

Governance shows what the organization actually did.

Good Faith Compliance is built around governance, not empty statements.

Why AI Governance Matters Now

AI rules, expectations, and liability standards are moving quickly.

Professional bodies, regulators, courts, insurers, clients, employers, and the public are all asking harder questions about how AI is being used, who is supervising it, what information is being exposed, and whether organizations can prove they acted responsibly.

For law firms, ABA guidance has already made AI use a professional responsibility issue involving competence, confidentiality, client communication, supervision, verification, and human review. AI is no longer just a technology decision. It is becoming a governance, ethics, and liability issue.

For organizations exposed to European markets or global clients, EU AI expectations are already shaping how businesses think about risk classification, transparency, documentation, human oversight, and accountability. Even organizations outside Europe are being pulled toward higher standards because customers, partners, vendors, and insurers are starting to expect proof.

In the United States, the situation is active but unsettled. Federal agencies, state governments, courts, bar associations, employers, and industry regulators are all moving at different speeds. That creates risk because businesses may not have one single rulebook, but they are still expected to show reasonable care, good-faith governance, and defensible decision-making.

Canada is moving in the same direction, with growing pressure around privacy, automated decision-making, workplace use, public trust, and responsible AI adoption. The legal landscape is still developing, but waiting for perfect clarity is not a safe strategy.

That is the real issue.

AI governance now matters because the environment is turbulent, fragmented, and changing faster than most organizations can comfortably manage. A static policy is a start, but it will not be enough forever. Organizations need a defensible first position now, and a system that can evolve as regulation, liability, technology, and public expectations continue to change.

For employers, AI enforcement risks are growing around hiring, workplace decisions, privacy, employee data, discrimination, and automated decision support.

The direction is clear:

AI use must be governed, documented, and kept under human accountability.

Good Faith Compliance helps organizations prepare for that reality with a practical first step.

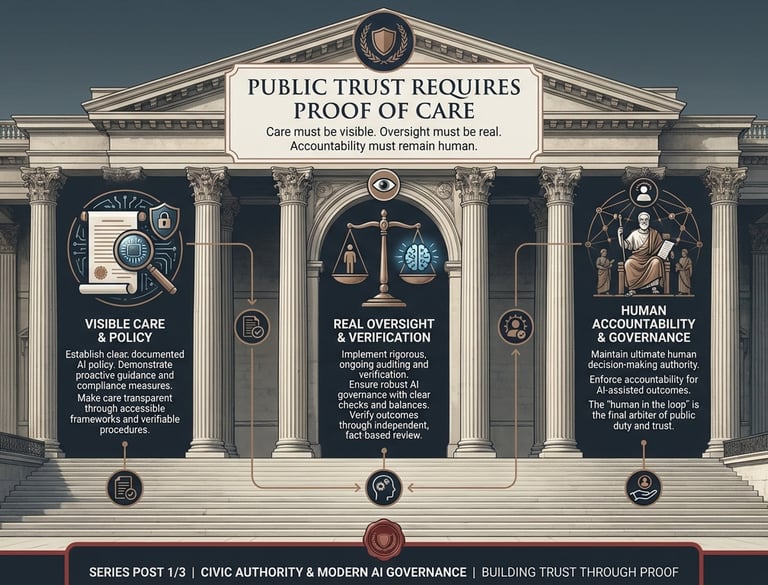

Why Public Trust Matters

Rory Cory, a highly respected military museum director, summarized the issue clearly:

“Museums operate in the public trust. Like courts, post offices, and schools, we are expected to meet a higher standard of care, accuracy, and accountability. Good-Faith Compliance creates a clear defensive position by showing we took reasonable steps to govern AI use before problems arise. It puts policy, oversight, verification, and documentation in place. This reduces liability exposure and reassures the public that AI is being used responsibly. It also sets the right example for safe, controlled, and accountable AI use.”

That public trust standard applies beyond museums.

It applies to small businesses, law firms, nonprofits, schools, associations, employers, professional firms, first responder organizations, veteran organizations, public-facing institutions, and any group people rely on.

The public trust mantra is simple:

Care must be visible. Oversight must be real. Accountability must remain human.

AI does not remove responsibility.

It raises the need for structure.

Reference: Our AI Governance Policy

TrustedByHeroes.com operates under an AI Governance Policy that reflects a good-faith governance posture.

The policy is built around:

Responsible AI use

Transparency

Human accountability

Human-in-the-loop oversight

Data responsibility

AI inventory

Risk classification

Review and validation

Escalation protocols

Evidence and documentation

Continuous improvement

The policy includes the core operating principle:

Oversight must have authority at the point of execution. If it cannot act, it is not governance.

Good Faith Compliance turns that principle into a usable package for organizations that need to start now.

How the Pieces Work Together

What the Good Faith Compliance Package Includes

1. AI Governance Policy

The AI governance policy defines the organization’s responsible AI position.

It establishes:

acceptable AI use

prohibited AI use

human review requirements

data responsibility

risk classification

escalation rules

documentation expectations

continuous improvement

This policy is the foundation for responsible AI use across operations, communication, and decision-making.

2. AI Usage Policy and Staff Guidance

The AI usage policy turns governance into practical, day-to-day direction.

It helps staff understand:

what they can do with AI

what they must not do

what information is restricted

when to verify AI output

when to disclose or escalate AI use

when human review is required

who is accountable for final decisions

Without clear guidance, people guess.

When people guess, risk grows.

Good Faith Compliance replaces guessing with clear, usable rules.

3. Training and Awareness

Policy alone is not enough.

Good Faith Compliance includes practical awareness training to help staff recognize:

hallucinations and output errors

confidentiality and privacy risks

bias and overreliance

unmanaged or “shadow AI” use

public-facing and employment-related risks

when to involve human review

when to escalate concerns

The goal is not to create AI experts.

The goal is to help people use AI safely, responsibly, and with awareness.

Training becomes the first operational defense.

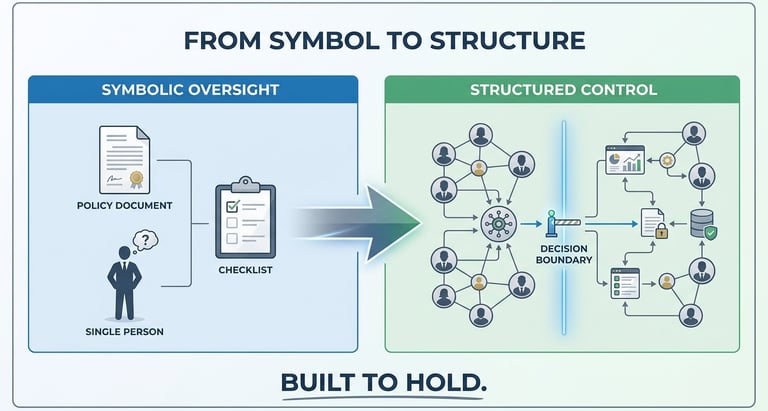

4. Human-in-the-Loop (HITL) Oversight

Human oversight must be real, not symbolic.

A person reviewing AI output must have the authority to:

review and verify

challenge or correct

stop or escalate

approve before action

Good Faith Compliance defines:

what requires human review

who performs it

when it must occur

what must be verified

who remains accountable

The rule is simple:

Human presence without authority is not governance.
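One way to picture oversight with real authority is a gate that AI output cannot pass without a recorded human decision. The sketch below is illustrative only, written in Python: `ReviewDecision`, `Review`, and `release_output` are hypothetical names chosen for this example and are not part of any Trusted By Heroes product.

```python
from dataclasses import dataclass
from enum import Enum


class ReviewDecision(Enum):
    APPROVE = "approve"
    CORRECT = "correct"
    STOP = "stop"
    ESCALATE = "escalate"


@dataclass
class Review:
    reviewer: str              # the accountable human (hypothetical field)
    decision: ReviewDecision
    corrected_text: str = ""   # used only when the decision is CORRECT


def release_output(ai_text: str, review: Review) -> str:
    """Return the text that may be acted on, or raise if the reviewer blocked it."""
    if not review.reviewer:
        raise PermissionError("No accountable human reviewer recorded.")
    if review.decision is ReviewDecision.APPROVE:
        return ai_text
    if review.decision is ReviewDecision.CORRECT:
        if not review.corrected_text:
            raise ValueError("A correction decision needs corrected text.")
        return review.corrected_text
    # STOP and ESCALATE both halt the action at this point.
    raise PermissionError(f"Blocked by {review.reviewer}: {review.decision.value}")
```

The point of the design is that nothing is released by default: the AI draft only moves forward when a named person approves or corrects it, and a stop or escalation actually prevents the action.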

5. DHITL — Distributed Human Oversight

Trusted By Heroes applies a stronger model:

DHITL — Distributed Human-in-the-Loop oversight.

AI risk cannot sit with one person.

Oversight must be structured across:

defined roles

clear review points

escalation pathways

peer support

training

authority at the point of execution

DHITL defines:

who reviews AI outputs

what work requires review

when secondary review is needed

when escalation is required

who can halt or override decisions

what must be documented

This reflects real-world operational environments where performance depends on structure, support, and clear authority—not individual guesswork.

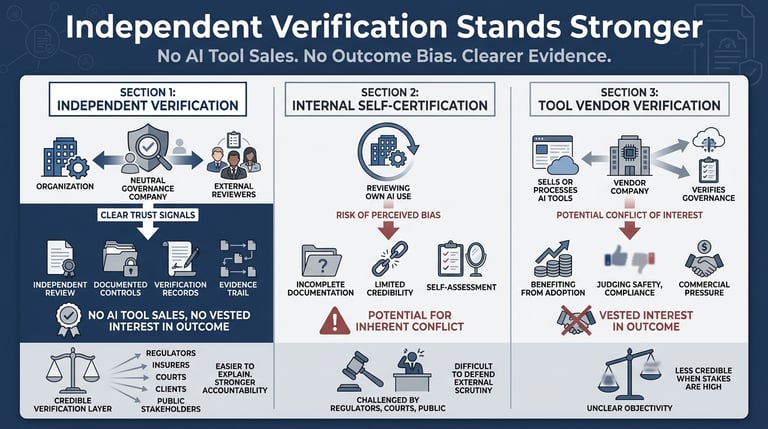

6. GovernSeal Verification

GovernSeal strengthens the evidence layer.

It provides verifiable records for governance documents and actions, including:

document verification

authorship and ownership records

version control

certificate-style proof lines

public-facing verification language

proof a document existed at a specific point in time

This shifts the organization from:

“We had a policy somewhere.”

to:

“Here is the verified governance record.”

7. AI Compliance Evidence Documentation

Governance must be supported by evidence.

Documentation may include:

policy versions

staff acknowledgments

training records

AI use inventories

review and decision logs

escalation records

governance statements

GovernSeal verification records

certificate-style completion records

The principle is simple:

If you cannot show it, it may not help you.

8. Certificate-Style Governance Support

Good Faith Compliance can produce structured, certificate-style documentation that shows:

governance steps were defined

training was delivered

oversight expectations were set

evidence was created and maintained

This does not claim full compliance.

It shows that the organization took reasonable, documented, good-faith steps to govern AI use before problems arise.

Good Faith Compliance may include third-party certificate-style support showing that the organization completed a baseline AI governance package.

This is not regulatory approval.

It is not legal advice.

It is not a guarantee.

It is a structured record showing that the organization took reasonable steps toward AI governance.

This may be useful for:

Clients

Boards

Regulators

Insurers

Employers

Public-facing reassurance

Professional partners

Community stakeholders

For many organizations comparing the best AI compliance programs for SMBs, the most useful first step is not a massive enterprise platform. It is a practical package that creates policy, oversight, verification, and documentation.

9. Audit Anchor Execution Layer

Audit Anchor is the stronger execution-layer component of the broader Trusted By Heroes governance system.

Where Good Faith Compliance creates the first-step policy, training, oversight, and documentation baseline, Audit Anchor is designed to strengthen evidence at the point where AI-assisted output becomes action.

Audit Anchor supports the principle:

Oversight must have authority at the point of execution.

It is intended to help organizations move beyond after-the-fact policy and toward stronger evidence at the point of decision, action, review, or approval.

In simple terms:

Good Faith Compliance creates the governance baseline.

GovernSeal supports proof and verification of governance records.

DHITL defines human authority and review.

Audit Anchor strengthens evidence at the point of action.

10. Public-Facing Trust Language

Some organizations need internal governance only.

Others also need public-facing reassurance.

Good Faith Compliance can help create plain-language public statements such as:

Responsible AI use statement

AI governance commitment

Public trust statement

Human oversight statement

Data responsibility statement

Good Faith Compliance participation statement

This is especially useful for organizations that operate in the public trust.

Public-facing language must be careful.

It should not overclaim.

It should not imply regulatory approval.

It should not suggest AI is risk-free.

It should say what matters:

We have taken reasonable steps to govern AI use through policy, oversight, verification, documentation, and human accountability.

Good Faith Compliance Packages

Good Faith Compliance is designed to meet organizations where they are.

Some organizations need a simple first step. Others need ongoing monitoring, stronger oversight, verification, and continuing governance intelligence.

Good Faith Compliance Basic

Good Faith Compliance Basic is for organizations that need a practical AI governance starting point.

It helps establish:

AI governance policy

AI usage policy

staff AI guidance

basic AI awareness training

human-in-the-loop expectations

AI risk classification

public-facing responsible AI language, where appropriate

good-faith governance position statement

Good Faith Compliance Basic creates the first defensible position by helping answer one critical question:

What did your organization put in place to govern AI use before problems arose?

Good Faith Compliance Pro

Governance, Oversight, Monitoring, Verification, and Intelligence

Good Faith Compliance Pro is for organizations that need more than a starting policy.

It includes everything in Good Faith Compliance Basic, plus:

DHITL oversight support

GovernSeal verification

GrayZone monitoring

The Practical AI Brief, delivered twice per month

ongoing AI governance updates

enhanced AI compliance evidence documentation

stronger public-facing trust language

deeper oversight and accountability support

Good Faith Compliance Basic creates the starting point.

Good Faith Compliance Pro helps keep that position current.

That matters because AI governance is not a one-time project. Tools change. Staff behavior changes. Regulations change. Client expectations change. Insurance standards change. Public trust expectations change.

Good Faith Compliance Pro helps organizations stay prepared before unmanaged AI use creates exposure.

Good Faith Compliance Pro Components

DHITL Oversight Support

DHITL — Distributed Human-in-the-Loop oversight — strengthens the human review layer.

It helps ensure AI oversight is not symbolic. A human in the loop must have authority to review, question, stop, correct, or escalate before AI output becomes action.

DHITL helps define:

who reviews AI outputs

what must be reviewed

when escalation is required

who has halt authority

what must be documented

how oversight responsibility is distributed

The principle is simple:

Oversight must have authority at the point of execution. If it cannot act, it is not governance.

GovernSeal Verification

GovernSeal supports the proof layer.

It helps create verifiable records for key governance documents, policies, statements, and compliance materials.

GovernSeal may support:

document verification

authorship and ownership records

version control

certificate-style proof lines

public-facing verification language

evidence that a governance document existed at a point in time

This helps move the organization from:

“We had a policy somewhere.”

to:

“Here is the verified governance record.”

GrayZone Monitoring

GrayZone monitoring supports ongoing governance visibility.

AI risk does not stay fixed after a policy is written. Tools change. Staff behavior changes. Regulations change. Professional guidance changes. Public expectations change. Client questions change. Insurance expectations change.

GrayZone helps track governance exposure signals and identify where the organization may need to strengthen its position.

It may support review around:

AI governance maturity

unmanaged AI use

policy gaps

documentation gaps

oversight weaknesses

public-facing AI exposure

employer AI risk

professional guidance changes

regulatory pressure

governance readiness indicators

GrayZone monitoring is not a guarantee that every risk is eliminated. It is an early-warning and visibility layer that helps leadership see where attention is needed.

The Practical AI Brief

Good Faith Compliance Pro includes The Practical AI Brief, delivered twice per month.

This briefing is built for practical leaders, not AI hype followers.

It covers:

AI governance developments

AI compliance trends

professional guidance

EU AI and regulatory pressure

employer AI risk

unmanaged AI use

HITL and DHITL oversight

useful AI tools and practical use cases

risk mitigation

product comparisons

safe AI integration practices

predictions and early warning signals

practical steps organizations can take now

The Practical AI Brief keeps organizations informed, current, and better prepared as the AI governance environment matures.

Implementing AI Governance Step by Step

Step 1: Identify AI Use

Find out where AI is already being used.

This may include internal work, public communication, HR, marketing, client service, research, drafting, customer support, and decision support.

Step 2: Classify Risk

Separate low-risk, moderate-risk, and high-risk use cases.

Low risk: internal brainstorming, formatting, general research support

Moderate risk: operational content, client-facing material, reports

High risk: legal, financial, employment, safety, privacy, or decision-impacting use
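As a rough illustration, the three tiers above can be written down as a simple lookup. This is a minimal sketch in Python, not part of the Good Faith Compliance package: the category labels are taken from the examples in this step, the `classify_use_case` function is hypothetical, and treating unlisted categories as high risk is a cautious assumption made for this example.

```python
from enum import Enum


class Risk(Enum):
    LOW = "low"
    MODERATE = "moderate"
    HIGH = "high"


# Hypothetical category labels, taken from the examples in Step 2.
RISK_BY_CATEGORY = {
    "internal brainstorming": Risk.LOW,
    "formatting": Risk.LOW,
    "general research support": Risk.LOW,
    "operational content": Risk.MODERATE,
    "client-facing material": Risk.MODERATE,
    "reports": Risk.MODERATE,
    "legal": Risk.HIGH,
    "financial": Risk.HIGH,
    "employment": Risk.HIGH,
    "safety": Risk.HIGH,
    "privacy": Risk.HIGH,
    "decision-impacting": Risk.HIGH,
}


def classify_use_case(category: str) -> Risk:
    """Return the risk tier for a use-case category.

    Assumption: anything not listed is treated as high risk until reviewed.
    """
    return RISK_BY_CATEGORY.get(category.strip().lower(), Risk.HIGH)


if __name__ == "__main__":
    for name in ("Formatting", "Reports", "Employment", "voice cloning"):
        print(f"{name}: {classify_use_case(name).value}")
```

Defaulting unknown uses to the highest tier means a new or unreviewed AI use gets human attention first, rather than slipping through as low risk.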

Step 3: Define Usage Rules

Put written rules in place.

Define approved use, restricted use, prohibited use, human review requirements, escalation triggers, and documentation expectations.

Step 4: Train People

Make sure staff understand the rules and risks.

Training should be practical, plain-language, and tied to real work.

Step 5: Define Human Oversight

Identify when human review is required.

Make sure the human in the loop has authority to stop, correct, approve, or escalate.

Step 6: Verify and Document

Create evidence that reasonable care was taken.

This may include policy records, training records, acknowledgments, oversight records, escalation records, and GovernSeal verification.

Step 7: Improve Over Time

Update the governance program as AI tools, regulations, client expectations, insurance standards, and risk conditions change.

What Good Faith Compliance Helps Reduce

Good Faith Compliance helps reduce exposure related to:

unmanaged or informal AI use

unclear employee AI use

weak AI guidance

missing AI usage policies

confidentiality mistakes

privacy risk

AI hallucinations

unverified public-facing content

employment-related AI risk

weak human review

missing AI compliance evidence

unclear accountability

inability to show reasonable care

loss of public confidence after unmanaged AI use

It does not eliminate every risk. Nothing does.

But it creates a stronger position than doing nothing, relying on informal habits, or waiting until a problem occurs.

Who This Is For

Good Faith Compliance is designed for organizations that need AI governance without unnecessary complexity.

It is especially relevant for:

small businesses

law firms

accounting firms

museums

schools

nonprofits

public-trust organizations

professional service firms

associations

veteran organizations

first responder organizations

employers using AI in workplace operations

organizations preparing for client, insurer, or regulator questions

This is a practical starting point for organizations that know AI is useful but understand unmanaged AI creates exposure.

What This Is Not

Good Faith Compliance is not:

legal advice

regulatory approval

a guarantee against liability

a replacement for professional judgment

a substitute for legal, privacy, employment, or compliance counsel

a claim that AI use is risk-free

a one-time checkbox

It is a practical governance framework that helps organizations show responsible, documented, good-faith action.

The Trusted By Heroes Standard

Trusted By Heroes operates under a clear AI governance principle:

Oversight must have authority at the point of execution. If it cannot act, it is not governance.

Our approach prioritizes:

structural control over observation

evidence over assumption

accountability over automation

human judgment over blind trust

documentation over memory

public trust over empty claims

practical governance over performative policy

AI can assist.

AI can accelerate.

AI can improve productivity.

But accountability must remain human.

Better Sooner Than Under Pressure

Most organizations will eventually be asked to explain how AI is being used.

The only question is whether they answer from preparation or reaction.

Waiting may mean the trigger comes from a regulator, client complaint, privacy issue, employment dispute, public error, confidentiality failure, insurer question, board review, funder concern, or loss of public confidence.

That is the wrong time to start building governance.

Good Faith Compliance gives your organization the first step: policy, training, oversight, verification, documentation, and human accountability.

Good Faith Compliance Pro adds ongoing oversight, GovernSeal verification, GrayZone monitoring, and The Practical AI Brief twice per month.

Start with a defensible first step.

Then keep it current.

Contact Info@TrustedByHeroes.com to discuss:

Good Faith Compliance Basic

Good Faith Compliance Pro

AI governance for small business

AI usage policy creation

staff AI guidance

HITL and DHITL oversight

GovernSeal verification

GrayZone monitoring

The Practical AI Brief

Audit Anchor execution-layer evidence

AI compliance evidence documentation

public-facing responsible AI trust statements

Better sooner than under pressure.

Supporting

Getting Veterans and First Responders back on mission!

Veteran-inspired AI Governance & Trust Infrastructure

Trusted By Heroes and Mounted Rifles Management

Veterans and First Responders receive direct support through SupportOurHeroes.Directory

Leadership and peer support are taught through RedFridayTalks.Help

The same governance protections are available to everyone.

© 2026. All rights reserved.