The Clean Sentence Is Often the Dangerous One

Bob McTaggart, edited with AI

5/5/2026 | 7 min read

Bob McTaggart

I keep AI controlled, compliant, accountable. AI Governance & HITL Compliance for SMBs | EU AI Act Readiness | Workforce AI Oversight Training | BaseState Compliance | Military Veteran-Led Trust Infrastructure

May 4, 2026

Richard Randall, the engineer-minded veteran friend we should all have, raised something in conversation that brought me right back to rooms I have been in over the years.

Not theoretical rooms.

Real ones.

Business rooms.

Military rooms.

Technical rooms.

Legal-adjacent rooms.

Rooms where people were trying to figure out what happened, who knew what, what was assumed, what was verified, and whether the decision made sense once the pressure came on.

His point was about lawyers dealing with engineers, scientists, mathematicians, and other technical people.

The core problem is simple enough:

They do not always use the same words the same way.

One of the biggest words is:

fact

That word can sound solid.

But I have learned the hard way that a “fact” is not always the same thing to every person in the room.

To a lawyer, a fact may be something that can be pleaded, tested, weighed, challenged, proven, or accepted under an evidentiary standard.

To an engineer, a fact may mean something much closer to direct verification, known method, known tolerance, known measurement, and known margin of error.

That is a big difference.

And when people miss that difference, trouble starts.

I Have Seen People Talk Past Each Other

I have seen people use the same sentence and walk away believing they agreed.

They did not.

They used the same words, but not the same meaning.

An engineer saying:

“The drawing indicates Plate 4 is 25mm thick at Section AB”

is not saying the same thing as:

“I personally measured Plate 4 at Section AB and confirmed it is 25mm thick.”

Those may sound similar if you are not from that world.

They are not similar.

One means the design document says it should be that way.

The other means the person verified it.

There is another version:

“The inspection report indicates Plate 4 is 25mm thick at Section AB.”

That means something different again.

It may mean somebody measured it.

It may mean the person signed off on the report.

It may mean the person accepts that the report says what it says, but has no independent present recollection of the specific measurement.

Those are not word games.

That is the difference between source, verification, memory, reliance, and responsibility.

I have seen that kind of distinction matter.

I have felt the temperature in the room change when somebody asks:

“Did you actually verify that yourself?”

That question changes everything.

Because now we are no longer talking about whether the sentence sounds true.

We are talking about what kind of truth it is.
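
One way to make that distinction visible is to record the basis of a statement next to the statement itself. The sketch below is only an illustration: the `Basis` labels, the `Claim` record, and the `personally_verified` helper are hypothetical names chosen for this example, not an established scheme.

```python
from dataclasses import dataclass
from enum import Enum


class Basis(Enum):
    """How the speaker comes to know the claim (hypothetical labels)."""
    MEASURED = "I personally measured it"
    DRAWING = "the drawing indicates it"
    REPORT = "the inspection report indicates it"


@dataclass(frozen=True)
class Claim:
    text: str      # the sentence as spoken
    basis: Basis   # what stands behind the sentence


def personally_verified(claim: Claim) -> bool:
    """Answer 'Did you actually verify that yourself?' for one claim."""
    return claim.basis is Basis.MEASURED


SENTENCE = "Plate 4 is 25mm thick at Section AB"

# The same sentence, carried by three different kinds of knowledge.
claims = [
    Claim(SENTENCE, Basis.DRAWING),
    Claim(SENTENCE, Basis.REPORT),
    Claim(SENTENCE, Basis.MEASURED),
]

for claim in claims:
    print(f"{claim.basis.value}: personally verified = {personally_verified(claim)}")
```

The text of all three claims is identical. Only the recorded basis tells them apart, which is the point of asking the question out loud.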

This Is Not Just an Engineering Problem

I have seen versions of this in business.

Somebody says:

“The client agreed.”

Then you ask:

Did they sign? Did they say yes on a call? Did someone tell you they agreed? Did they agree to the concept or the exact terms? Did they agree before or after the change? Was that agreement documented?

Those are very different things.

I have seen it in operations.

Somebody says:

“The team was informed.”

Then you ask:

Who was informed? When? By whom? Did they understand? Was acknowledgment required? Did anyone object? Did the instruction change afterward?

Again, very different things.

I have seen it in leadership.

Somebody says:

“We had approval.”

Then under pressure it becomes:

We thought we had approval. We had verbal approval. We had approval for an earlier version. We had approval from someone who may not have had authority. We had approval, but not for execution.

That last one matters.

Approval for discussion is not approval for action.

Approval for draft is not approval for deployment.

Approval yesterday may not survive a changed condition today.

This is why I pay so much attention to execution.

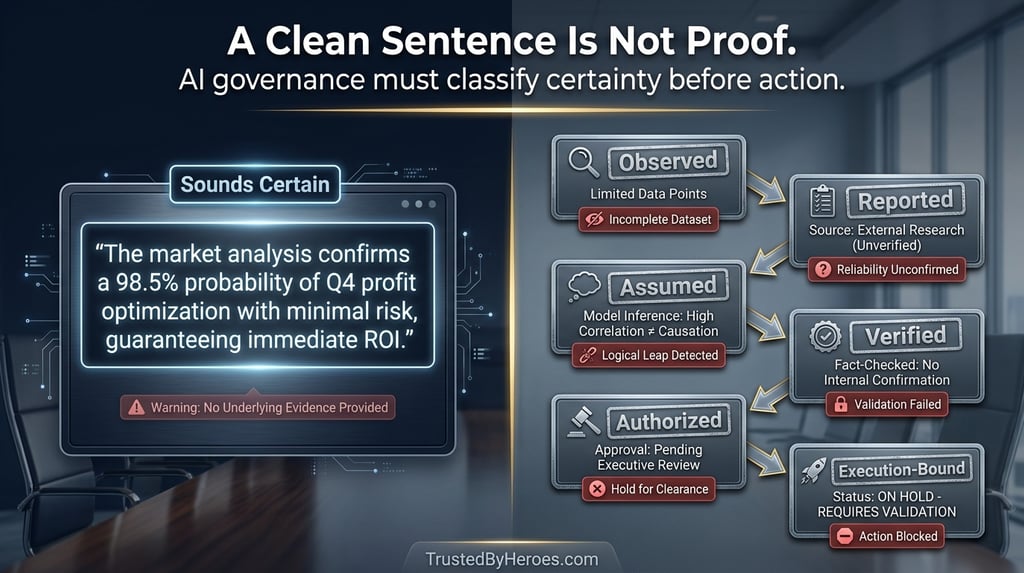

AI Makes the Old Problem Faster

The problem Richard raised is not new.

AI did not create it.

AI accelerates it.

AI can write a sentence that looks final, balanced, professional, and confident.

That does not mean it is verified.

That does not mean it is current.

That does not mean it is sourced properly.

That does not mean the person using it understands the underlying certainty.

That does not mean anyone had authority to act on it.

That is the danger.

AI can turn weak input into strong-looking language.

It can make an assumption sound like a conclusion.

It can make a summary sound like verification.

It can make a guess sound like a finding.

It can make borrowed information sound like knowledge.

I have seen enough human systems fail on bad assumptions to know what happens when a machine starts polishing those assumptions for us.

The sentence gets cleaner.

The risk gets harder to see.

“Human Reviewed” Is Not Enough

A lot of AI policy language leans on the phrase:

human reviewed

I understand why.

It sounds responsible.

But by itself, it is too thin.

I have been involved in enough reviews to know that “reviewed” can mean ten different things.

Reviewed for grammar.

Reviewed for tone.

Reviewed for policy.

Reviewed for legal risk.

Reviewed for technical accuracy.

Reviewed against source material.

Reviewed for authority.

Reviewed for execution.

Those are not the same.

A human can review an AI output and still miss the only issue that matters.

The issue may not be whether the sentence is well written.

The issue may be whether the sentence should be relied on at all.

That is where governance has to get sharper.

Not more complicated for the sake of it.

Sharper.

What kind of statement is this?

Observed?

Specified?

Reported?

Inferred?

Assumed?

AI-generated?

Human-verified?

Approved for limited use?

Approved for execution?

Blocked until verified?

That kind of classification matters.

Because in the real world, when something goes wrong, people do not just ask whether there was a document.

They ask what the document proves.
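
As a rough sketch, the two lists above (what a review covered, and what kind of statement is being relied on) can be kept as explicit labels instead of a single "human reviewed" flag. The enum names mirror the lists in this section. The `Statement` record, the `missing_reviews` helper, and the choice of which reviews a given use requires are assumptions made for illustration.

```python
from dataclasses import dataclass, field
from enum import Enum, auto


class Status(Enum):
    """What kind of statement this is (labels taken from the list above)."""
    OBSERVED = auto()
    SPECIFIED = auto()
    REPORTED = auto()
    INFERRED = auto()
    ASSUMED = auto()
    AI_GENERATED = auto()
    HUMAN_VERIFIED = auto()
    APPROVED_FOR_LIMITED_USE = auto()
    APPROVED_FOR_EXECUTION = auto()
    BLOCKED_UNTIL_VERIFIED = auto()


class ReviewScope(Enum):
    """What a review actually covered."""
    GRAMMAR = auto()
    TONE = auto()
    POLICY = auto()
    LEGAL_RISK = auto()
    TECHNICAL_ACCURACY = auto()
    SOURCE_MATERIAL = auto()
    AUTHORITY = auto()
    EXECUTION = auto()


@dataclass
class Statement:
    text: str
    status: Status
    reviewed_for: set = field(default_factory=set)  # set of ReviewScope


def missing_reviews(stmt: Statement, required: set) -> set:
    """Return the required review scopes that nobody has covered yet."""
    return required - stmt.reviewed_for


# Hypothetical example: an AI draft that a human "reviewed" for wording only.
draft = Statement(
    text="The client agreed.",
    status=Status.AI_GENERATED,
    reviewed_for={ReviewScope.GRAMMAR, ReviewScope.TONE},
)

# Assumed requirement for relying on the sentence in a client record.
needed = {ReviewScope.SOURCE_MATERIAL, ReviewScope.AUTHORITY}

print(sorted(scope.name for scope in missing_reviews(draft, needed)))
```

The draft was reviewed, and it still has no review that covers the source or the authority behind it. Recording the scope is what lets that gap show up before the sentence is relied on.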

I Have Seen “Probably Right” Become the Problem

Most failures I have seen do not start with someone announcing they are about to make a reckless decision.

They start much quieter.

A number gets carried forward.

A report gets trusted because it looks official.

A meeting note becomes a decision record.

A draft becomes final.

A person assumes someone else checked it.

A statement gets repeated enough times that everyone starts treating it like verified knowledge.

Then something goes wrong.

And suddenly everyone is trying to reconstruct the path:

Who knew? Who checked? Who assumed? Who approved? Who had authority? What changed? Why did it proceed?

That is the part AI governance needs to address.

Not just whether AI was used.

That is only the beginning.

The deeper issue is whether the organization can prove the chain from source to statement to review to authority to execution.

That is where many systems are weak.

They can show activity.

They cannot show admissible reasoning.

The DHITL Lesson

This is also why I believe in Distributed Human-in-the-Loop governance.

But not as a buzzword.

Not as “more humans touched it.”

That does not solve the problem.

I have seen groups reinforce bad assumptions.

A room full of smart people can still carry the same stale fact if nobody owns the duty to recheck it.

DHITL only works if the roles are clear.

One person verifies the source.

One person checks the policy boundary.

One person checks legal or professional risk.

One person confirms operational authority.

One person confirms the condition still exists at the moment of execution.

That is useful.

That is not committee theatre.

That is distributed discipline.

The point is not how many people approved it.

The point is what each person actually verified.

That is the lesson Richard’s example points toward.

An engineer knows the difference between:

I measured it.

and:

The drawing says it.

and:

The report says it.

AI governance needs the same discipline.
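
A minimal sketch of that idea follows, assuming the five roles described above are treated as named checks. The check names, the `SignOff` record, and the reviewer labels are hypothetical. The only thing the sketch demonstrates is that coverage is counted per check, not per person.

```python
from dataclasses import dataclass

# The five duties described above, as hypothetical check names.
REQUIRED_CHECKS = (
    "source",
    "policy_boundary",
    "legal_or_professional_risk",
    "operational_authority",
    "condition_at_execution",
)


@dataclass(frozen=True)
class SignOff:
    check: str      # which duty this person took on
    reviewer: str   # who signed
    verified: str   # what they actually verified, in their own words


def uncovered_checks(sign_offs: list) -> list:
    """Return required checks that no sign-off with a stated finding covers."""
    covered = {s.check for s in sign_offs if s.verified.strip()}
    return [check for check in REQUIRED_CHECKS if check not in covered]


# Three people touched it, but all three looked at the same thing.
sign_offs = [
    SignOff("source", "reviewer_a", "Compared the figure to the inspection report."),
    SignOff("source", "reviewer_b", "Read the same inspection report."),
    SignOff("source", "reviewer_c", "Confirmed the report is attached."),
]

print(f"{len(sign_offs)} sign-offs, uncovered: {uncovered_checks(sign_offs)}")
```

Three approvals leave four duties unowned. A head count would have reported this item as well reviewed.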

From Evidence to Execution

Evidence matters.

But I do not think evidence alone is enough anymore.

You can have records, logs, screenshots, approvals, and timestamps and still miss the core issue.

Should the action have been allowed to occur?

That is the execution question.

A decision may have been valid when formed.

The condition may change before action.

The authority may expire.

The source may be updated.

The risk may shift.

The record may still exist, but the right to act on it may no longer be there.

That is why I use the language of admissible execution.

Not just:

Can we prove something happened?

But:

Can we prove it was allowed to happen when it became effect-capable?

That is the difference.

And AI makes that difference more important because AI shortens the distance between statement and action.
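
The execution question can be sketched as a check that runs at the moment of action, not at the moment of approval. This is an illustration under stated assumptions: the `Approval` fields, the `execution_blockers` function, the version labels, and the 24-hour validity window are all hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class Approval:
    action: str           # what exactly was approved
    approved_by: str      # who approved it
    source_version: str   # the source the decision was based on
    expires_at: datetime  # when the authority to act lapses


def execution_blockers(approval, action, current_source_version, now):
    """Return reasons the action should not proceed right now (empty if none)."""
    blockers = []
    if action != approval.action:
        blockers.append("approval covers a different action")
    if now >= approval.expires_at:
        blockers.append("authority has expired")
    if current_source_version != approval.source_version:
        blockers.append("source changed since approval")
    return blockers


approved_at = datetime(2026, 5, 4, 9, 0, tzinfo=timezone.utc)
approval = Approval(
    action="send client notice",
    approved_by="operations lead",
    source_version="v1",
    expires_at=approved_at + timedelta(hours=24),  # assumed validity window
)

# Same record, checked at two different moments of execution.
soon = execution_blockers(
    approval, "send client notice", "v1", approved_at + timedelta(hours=1)
)
later = execution_blockers(
    approval, "send client notice", "v2", approved_at + timedelta(hours=48)
)

print("one hour later:", soon)
print("two days later:", later)
```

The approval record is identical in both calls. What changes is whether the right to act on it still exists when the action is about to take effect.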

What I Took From Richard’s Point

What I took from Richard Randall’s point is this:

The danger is not only that people disagree.

The danger is that people agree too quickly because they think the words mean the same thing.

That happens in law.

It happens in engineering.

It happens in business.

It happens in leadership.

It happens in operations.

And now it happens with AI.

AI can sit in the middle and produce language that everyone accepts because it sounds right.

But sounding right is not governance.

A clean sentence is not a verified fact.

A reviewed output is not automatically an authorized action.

A record is not automatically evidence that survives challenge.

The discipline has to be built before the moment of consequence.

What did we know?

What did we assume?

What did we verify?

What did someone else report?

What did AI generate?

Who had authority to rely on it?

Was it still valid when action occurred?

That is the work.

Not panic.

Not hype.

Not policy theatre.

Just disciplined governance built around the way real systems actually fail.

My Takeaway

I have seen this pattern enough times to respect it.

People do not usually get into trouble because they had no information.

They get into trouble because they misunderstood the status of the information they had.

AI will make that easier to do at speed.

That is why the future of AI governance is not just better prompts, better tools, or better policies.

It is better classification of certainty.

Observed.

Specified.

Reported.

Inferred.

Assumed.

Verified.

Authorized.

Execution-bound.

Blocked.

That is the difference between a sentence that sounds right and a decision that can survive being tested.

Bob McTaggart | Veteran-led AI Governance & Trust Infrastructure

#ai #riskmanagement #trustedbyheroes #supportourheroesdirectory #redfridaytalkshelp

Supporting

Getting Veterans and First Responders back on mission!

Veteran-inspired AI Governance & Trust Infrastructure

Trusted by Heroes and Mounted Rifles Management

Veterans and First Responders receive direct support through SupportOurHeroes.Directory

Leadership and peer support are taught through RedFridayTalks.Help

The same governance protections are available to everyone.

© 2026. All rights reserved.