Why “Do-It-Yourself” AI Governance With Screenshots And Word Files Is Dangerous

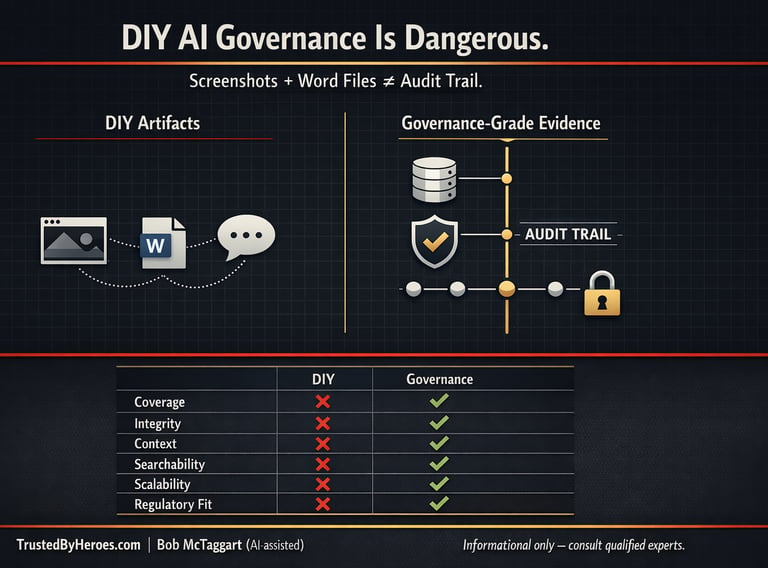

Bob McTaggart edited with AI

1/25/2026 · 2 min read

Executive Summary

Treating AI governance as a DIY paperwork exercise—screenshots of AI decisions, scattered Word files claiming “human in the loop,” and ad-hoc notes in email or chat—is not just weak process. It is a liability amplifier.

Modern governance frameworks and regulators are converging on a standard of continuous, systematized, auditable controls across the full AI lifecycle. DIY approaches fail to meet those expectations and expose organizations to regulatory, litigation, and reputational risk.

This report explains why.

1. What DIY AI Governance Looks Like

Common patterns include:

• Screenshots pasted into internal systems

• Word or Google Docs with manual “approval” notes

• Emails or chat messages documenting agreement with AI outputs

• Informal memos saying “keep a human in the loop”

These create the appearance of governance without engineered controls.

2. What Serious AI Governance Requires

Across NIST AI RMF, ISO/IEC 42001, and the EU AI Act, expectations include:

• AI system inventories and risk classification

• Lifecycle documentation

• Defined accountability and human-in-the-loop (HITL) design

• Continuous, system-generated audit logs

• Risk and impact assessments

• Policy aligned to real workflows

The unifying requirement is structured, system-level traceability.
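As a rough illustration of what "structured, system-level traceability" can mean in practice, the sketch below emits one machine-readable record per AI decision. The `AuditEvent` schema, its field names, and the sample values are all hypothetical; none of the frameworks named above prescribes this exact shape.

```python
import json
from dataclasses import dataclass, asdict, field
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class AuditEvent:
    """One system-generated record of an AI decision (hypothetical schema)."""
    system_id: str            # key into the organization's AI system inventory
    risk_tier: str            # illustrative label, e.g. "high" or "limited"
    model_version: str        # which model produced the output
    prompt: str               # input sent to the model
    output: str               # what the model returned
    reviewer: Optional[str]   # accountable human, or None if no review happened
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def to_log_line(event: AuditEvent) -> str:
    """Serialize the event as a single JSON line with a stable key order."""
    return json.dumps(asdict(event), sort_keys=True)


# Sample values are invented for illustration only.
event = AuditEvent(
    system_id="claims-triage",
    risk_tier="high",
    model_version="2026-01-10",
    prompt="Summarize claim 1234",
    output="Recommend manual review",
    reviewer="j.doe",
)
line = to_log_line(event)
```

Because the application writes the record itself at decision time, every decision is covered, which a screenshot taken after the fact cannot guarantee.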

3. Why Screenshots And Word Files Are Structurally Unfit

DIY artifacts fail on:

• Coverage (selective, incomplete)

• Integrity (easy to edit, no chain-of-custody)

• Context (missing model, prompt, and data lineage)

• Searchability (manual vs queryable logs)

• Scalability (manual capture cannot keep pace with decision volume)

• Regulatory alignment (no continuous, system-generated record)

They solve optics, not accountability.
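The integrity and searchability gaps listed above can be made concrete with a small sketch. It assumes a hash-chained, append-only log, which is one common way to make records tamper-evident; it is not a technique the frameworks mandate. The function names and record fields are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, record: dict) -> str:
    """Hash the record together with the hash of the entry before it."""
    payload = prev_hash + json.dumps(record, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append(chain: list, record: dict) -> None:
    """Add a record, linking it to the current tail of the chain."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    chain.append(
        {"record": record, "prev": prev_hash, "hash": _digest(prev_hash, record)}
    )


def verify(chain: list) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in chain:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True


log = []
append(log, {"decision": "approve", "model": "v1", "reviewer": "a.lee"})
append(log, {"decision": "deny", "model": "v1", "reviewer": "b.kim"})
intact = verify(log)

# Simulate an after-the-fact edit of the first record on a copy of the log.
tampered = [dict(entry) for entry in log]
tampered[0] = {**tampered[0], "record": {**tampered[0]["record"], "decision": "deny"}}
tamper_detected = not verify(tampered)

# Structured entries are also queryable, unlike a folder of screenshots.
denials = [e["record"] for e in log if e["record"]["decision"] == "deny"]
```

A chain like this is tamper-evident rather than tamper-proof: someone able to rewrite the whole log could recompute every hash, so real deployments typically also store the latest hash somewhere the log's writers cannot change.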

4. Discovery, Evidence, and E-Discovery Risk

Courts increasingly treat AI records as standard electronic evidence.

DIY documentation creates dual exposure:

• Failure to preserve = spoliation risk

• Inconsistent artifacts = narrative of sloppy governance

Automated audit trails at least demonstrate a consistent governance posture.

5. Sector-Specific Failure Scenarios

Healthcare: inability to prove clinical oversight

Finance: exposure to discrimination and fair-lending claims

Employment: weak defenses in bias and HR litigation

Critical infrastructure: inability to prove guardrails and accountability

DIY governance becomes indefensible in high-impact environments.

6. Litigation And Liability Allocation

Primary responsibility rests with the deployer and operator.

Advisors may face professional liability, but:

• Duty of care is not delegable

• Contracts often limit advisor liability

• Courts expect benchmarking to recognized frameworks

• Operators remain accountable for implementation choices

Relying on DIY governance weakens defenses against both regulators and plaintiffs.

7. Strategic Takeaways

Leaders should:

• Treat AI governance as engineered control, not paperwork

• Assume AI records are discoverable

• Implement automated audit trails

• Engineer HITL with structured escalation and logging

• Align with recognized frameworks

DIY governance does not meet modern expectations.
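To show how "HITL with structured escalation and logging" differs from a memo that says "keep a human in the loop", here is a minimal sketch. The `CONFIDENCE_FLOOR` threshold, the `Decision` fields, and the event names are assumptions made for illustration, not values taken from any framework.

```python
from dataclasses import dataclass

CONFIDENCE_FLOOR = 0.90  # assumed threshold; set by policy in a real system


@dataclass
class Decision:
    case_id: str
    model_output: str
    confidence: float
    high_impact: bool  # e.g. affects credit, employment, or clinical care


def route(decision: Decision, audit_log: list) -> str:
    """Decide whether a human must review, and log the routing either way."""
    needs_human = decision.high_impact or decision.confidence < CONFIDENCE_FLOOR
    outcome = "escalated" if needs_human else "auto_approved"
    audit_log.append(
        {
            "case_id": decision.case_id,
            "event": outcome,
            "confidence": decision.confidence,
            "high_impact": decision.high_impact,
        }
    )
    return outcome


def record_review(case_id: str, reviewer: str, approved: bool, audit_log: list) -> None:
    """Log the human decision so oversight is evidenced, not just asserted."""
    audit_log.append(
        {
            "case_id": case_id,
            "event": "human_review",
            "reviewer": reviewer,
            "approved": approved,
        }
    )


audit_log = []
first = route(Decision("c1", "approve", 0.97, False), audit_log)
second = route(Decision("c2", "deny", 0.97, True), audit_log)
third = route(Decision("c3", "approve", 0.50, False), audit_log)
record_review("c2", "j.doe", approved=False, audit_log=audit_log)
```

The escalation rule lives in code and every routing decision produces a log entry, so the record shows both when a human was required and what that human decided.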

Legal Disclaimer:

This content is for informational purposes only and does not constitute legal, regulatory, or professional advice. Organizations should consult qualified legal, compliance, and technical professionals regarding their specific circumstances.

#ai #riskmanagement #trustedbyheroes

Supporting

Getting Veterans and First Responders back on mission!

Veteran-inspired AI Governance & Trust Infrastructure

Trusted by Heroes and Mounted Rifles Management

Veterans and First Responders receive direct support through SupportOurHeroes.Directory

Leadership and peer support are taught through RedFridayTalks.Help

The same governance protections are available to everyone.

© 2026. All rights reserved.